Lecture 5

Ashish Rastogi, Keshav Kunal

In this lecture, we will discuss games in extensive form, define the notion of a tree

and subgame perfect equilibria, and understand some underlying properties of the

Nash equilibrium in such games. Next, we will broach the theory of utility.

An extensive game is an explicit description of the sequential structure of the decision problems encountered by the players in a strategic situation. The model allows us to study solutions in which each player can consider her plan of action not only at the beginning of the game, but also at any point in time at which she has to make a decision.

A general model of an extensive game allows each player, when making his choices,

to be imperfectly informed about what has happened in the past. However, in this

lecture, we limit our attention to the simpler model where each player is perfectly

informed about the players' previous actions at each point in the game.

An extensive game is a detailed description of the sequential structure of the decision problems encountered by the players in a strategic situation. There is perfect information in such a game if each player, when making any decision, is perfectly informed of all the events that have previously occurred and of the pay-offs associated with each way the game can end. Further, players take turns in making moves, and the number of moves in a game is finite.

Consider the game of chess. There are two players, white and black, who move alternately. At any stage in the game, depending upon the board position, each player has a finite number of moves to choose from. The board position depends upon the previous moves of both white and black. When the game ends, there are three possible outcomes: white wins, black wins, or a draw. In modeling this game, let us assume that each player remembers (is perfectly informed of) all previous moves. Further, let us assume that the total number of moves is bounded by a maximum, after which, if no result is obtained, a draw is forced.

Such a game of chess may be modeled as a tree. The root node corresponds to the initial state of the game. At the beginning of the game, it is white's turn to move. At any time, the state of the chess board is determined by the sequence of moves that have been made from the start of the game. The different edges emanating from the root correspond to possible first moves by white, and these edges are incident on nodes that correspond to the board position (state) achieved by the move. From each of these nodes there are edges that correspond to a possible move by black, and these edges are incident on nodes that correspond to the board position after a move by white and a move by black. The game tree is constructed in this manner, and the leaves correspond to situations where either the game has ended (in a white win or a black win) or the maximum number of moves has been played (in which case we declare it a draw).

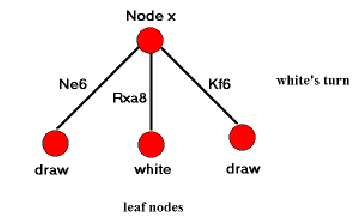

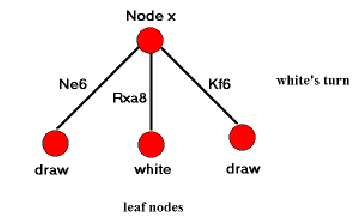

Figure 1: A node one level above the leaf nodes in the tree for the game of chess. It is white's turn to move.

Hence, each leaf can be labeled with one of white, black or draw, corresponding to the outcome the leaf represents. Consider a node $x$ that has only leaf nodes as its children (Figure 1). Suppose it is white's turn when the game is in the state corresponding to node $x$. It is clear that once the game reaches this state, white can play Rxa8 (move the rook to the square $a8$) and win the game. Hence, we can label node $x$ as white, because we know that once the game reaches node $x$, white is sure to win. If at node $x$ there are no children with label white, then white would look to draw, and in such a case, provided some child is labeled draw, node $x$ can be labeled draw. Finally, if white can neither win nor draw from the board position at $x$, then white is sure to lose and we may label node $x$ as black.

In this manner, we can fold up the finite game tree and label each node with one of the three labels, depending on the labels of its children and the player who has the move.

This procedure is described more formally as Zermelo's Algorithm for solutions of games in extensive form later in these scribes. Note that if the root node is labeled white, then white can force a win. Similarly, if it is labeled draw, white can do no better than a draw against correct play by black, and if it is labeled black, black can force a win.
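The fold-up labeling can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the tiny tree below is hypothetical (it is not a real chess position), and the names `PREFERENCE` and `label` are chosen here for the example. A leaf is a label string, and an internal node is a pair of the player to move and a list of children.

```python
# Each mover's ranking of the three labels, best first.
PREFERENCE = {
    "white": ["white", "draw", "black"],
    "black": ["black", "draw", "white"],
}

def label(node):
    """Fold up the tree: return the label of `node`.

    A leaf is one of the strings "white", "black", "draw".
    An internal node is a pair (mover, children).
    """
    if isinstance(node, str):
        return node
    mover, children = node
    child_labels = {label(child) for child in children}
    # The mover steers the game to the best label available among the children.
    for outcome in PREFERENCE[mover]:
        if outcome in child_labels:
            return outcome
    raise ValueError("internal node without children")

# Hypothetical two-level tree: white moves at the root, black at the next level.
tree = ("white", [
    ("black", ["white", "draw"]),   # black avoids the white win, so this node is a draw
    ("black", ["black", "white"]),  # black can win here
])
root_label = label(tree)
```

In this toy tree white chooses the first branch, so the root is labeled draw.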

The above analysis of the game of chess implies that chess is a trivial game because

the outcome may be determined even before the first move has been made. If such is

the case, then why is chess so interesting? Should there not, then, be a computer

that should always be able to defeat a human?

In our entire analysis, we assumed that the game tree was easily constructed and available to us. We assumed that it was finite, and ignored its size. Now, let us consider the size of a typical game tree of chess. Let us assume that the maximum number of moves is 64. The number of possible board positions is very large, even assuming that no pieces are captured. Hence, the number of nodes in the game tree is far too large for the tree to be stored completely in any practical computer memory or human mind, and so the assumption that players have complete information about the sequences of moves and the pay-offs at the leaf nodes turns out to be infeasible for the game of chess, which is why it is still interesting.

Consider the following two player game. Suppose that there are 10 sticks on the board. Two players (player 1 and 2) take turns alternately, and in each turn, a player may either pick 1 stick or pick 2 sticks. The player who picks the last stick wins. What would be the strategy of each player in this game?

Suppose player 1 adopts the following strategy: at any stage, after player 1's move, the number of sticks left on the board is a multiple of 3. This strategy implies that starting with 10 sticks, player 1 picks up 1 stick (thus 9 sticks remain, which is a multiple of 3). Then, if player 2 picks up 1 stick, player 1 picks up 2, and if player 2 picks up 2 sticks, player 1 picks up 1. A total of 3 sticks is thus removed in each round of player 2 followed by player 1, which ensures that after every move of player 1 the number of sticks is divisible by three. What does such a strategy ensure? Consider the last round but one: player 1 will have played so that 3 sticks are left on the board. In such a case, no matter what player 2 plays, player 1 always has a winning move.

Simplified PickStick can also be modeled using a game tree. Each node in the tree corresponds to a particular number of sticks left on the board. The root node then corresponds to 10 sticks. The leaf nodes correspond to no sticks left on the board, and the player who arrives at a leaf node wins. Two edges emanate from each internal node, corresponding to picking 1 or 2 sticks (only one edge when a single stick is left). Consider a node where 3 sticks are left, and it is player 2's turn. It is clear that if she picks 1 stick, then player 1 can pick 2 and win, and if she picks 2 sticks, then player 1 can pick 1 and win. Therefore, we can label this node as one where player 1 can definitely win. Building up the tree like this, we get the strategy mentioned in the earlier paragraph.
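As an illustration, the tree-labeling argument for simplified PickStick can be written as a short recursion. This is a minimal sketch, not part of the original notes; the names `MAX_PICK`, `mover_wins` and `winning_picks` are assumptions made for the example. A node is identified only by the number of sticks left, and the label records whether the player about to move can force a win.

```python
from functools import lru_cache

MAX_PICK = 2  # simplified PickStick: a player picks 1 or 2 sticks per turn

@lru_cache(maxsize=None)
def mover_wins(sticks):
    """True if the player about to move can force a win with `sticks` left.

    With 0 sticks the previous player picked the last stick, so the mover
    has already lost (any() over no legal picks is False).
    """
    return any(
        not mover_wins(sticks - pick)
        for pick in range(1, min(MAX_PICK, sticks) + 1)
    )

def winning_picks(sticks):
    """Picks that leave the opponent at a node from which she cannot win."""
    return [
        pick
        for pick in range(1, min(MAX_PICK, sticks) + 1)
        if not mover_wins(sticks - pick)
    ]

first_move = winning_picks(10)
```

Starting from 10 sticks, the only winning first pick is 1 stick, which leaves 9, in agreement with the strategy described above. From 3 sticks the mover has no winning pick.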

In the generalized two player PickStick, each of the two players can pick 1 through $k$ sticks, and initially there are $n$ sticks on the board. As described earlier, a winning strategy corresponds to picking sticks such that the number of sticks left on the board is a multiple of $k+1$. Once again, a player who can ensure this after each of her moves will necessarily win the game. In the simplified PickStick mentioned earlier, we have $n = 10$ and $k = 2$.
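The rule for the generalized game can be checked numerically. The sketch below is an illustration only; it writes `k` for the largest number of sticks a player may pick in one turn, and the function names `losing_positions` and `best_pick` are hypothetical. It builds the win/lose table bottom-up and compares the losing positions with the multiples of `k + 1`.

```python
def losing_positions(max_sticks, k):
    """Stick counts from which the player about to move cannot force a win."""
    # wins[m] is True if the mover can force a win with m sticks left.
    # wins[0] is False: the previous player picked the last stick.
    wins = [False] * (max_sticks + 1)
    for m in range(1, max_sticks + 1):
        wins[m] = any(not wins[m - pick] for pick in range(1, min(k, m) + 1))
    return [m for m in range(max_sticks + 1) if not wins[m]]

def best_pick(sticks, k):
    """Pick that leaves a multiple of k + 1, or None if no such pick exists."""
    remainder = sticks % (k + 1)
    return remainder if remainder != 0 else None

simplified = losing_positions(10, 2)
```

Note that the first player can follow the rule only when the initial number of sticks is not itself a multiple of `k + 1`; otherwise `best_pick` returns `None` and it is the second player who can enforce it.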

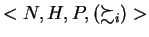

Mathematically, an extensive game with perfect information has the following components.

- A set $N$ (the set of players).
- A set $H$ of sequences (finite or infinite) that satisfies the following three properties.
    - The empty sequence $\emptyset$ is a member of $H$.
    - If $(a^k)_{k=1,\ldots,K} \in H$ (where $K$ may be infinite) and $L < K$ then $(a^k)_{k=1,\ldots,L} \in H$.
    - If an infinite sequence $(a^k)_{k=1}^{\infty}$ satisfies $(a^k)_{k=1,\ldots,L} \in H$ for every positive integer $L$ then $(a^k)_{k=1}^{\infty} \in H$.

  (Each member of $H$ is a history; each component of a history is an action taken by a player.) A history $(a^k)_{k=1,\ldots,K} \in H$ is terminal if it is infinite or if there is no $a^{K+1}$ such that $(a^k)_{k=1,\ldots,K+1} \in H$. The set of terminal histories is denoted $Z$.
- A function $P$ that assigns to each nonterminal history (each member of $H \setminus Z$) a member of $N$. ($P$ is the player function, $P(h)$ being the player who takes an action after history $h$.)
- For each player $i \in N$ a preference relation $\succsim_i$ on $Z$ (the preference relation of player $i$).

Sometimes it is convenient to specify the structure of an extensive game without specifying the players' preferences. We refer to a triple $\langle N, H, P \rangle$ whose components satisfy the first three conditions in the definition as an extensive game form with perfect information.

If the set $H$ of possible histories is finite then the game is finite. If the longest history is finite then the game has a finite horizon. Let $h$ be a history of length $k$; we denote by $(h, a)$ the history of length $k+1$ consisting of $h$ followed by $a$.

Throughout these scribes we refer to an extensive game with perfect information simply as an "extensive game". Further, we assume that the game is finite. We interpret such a game as follows. After any nonterminal history $h$, player $P(h)$ chooses an action from the set

$$A(h) = \{a : (h, a) \in H\}.$$

The empty history is the starting point of the game; we sometimes refer to it as the initial history. At this point, player $P(\emptyset)$ chooses a member of $A(\emptyset)$. For each possible choice $a^0$ from this set, player $P(a^0)$ subsequently chooses a member of the set $A(a^0)$; this choice determines the next player to move, and so on. A history after which no more choices have to be made is terminal.

Consider the following variation of the PickStick game described earlier. There are $n$ sticks on the board initially. Three players $1$, $2$ and $3$ are playing the game and take turns. Each player can pick up $a$ sticks ($1 \le a \le k$) in one turn. The player that picks last wins the game. How would the players play this game?

This game can be modeled in extensive form as:

- The set of players, $N = \{1, 2, 3\}$;
- The set of histories $H = \{(a_1, \ldots, a_m)\}$ where $a_j$ is the number of sticks picked ($1 \le a_j \le k$) by the player who has the $j$th turn (player $1$ if $j \equiv 1$ (mod 3), player $2$ if $j \equiv 2$ (mod 3), player $3$ if $j \equiv 0$ (mod 3)), and the total $a_1 + \cdots + a_m$ does not exceed $n$.
- For a terminal history $h$, let the length $|h|$ denote the total number of actions in $h$. Player $i$ wins if she is the last to pick sticks, that is, $|h| \equiv i$ (mod 3). Define $Z_i = \{h \in Z : |h| \equiv i \ (\mathrm{mod}\ 3)\}$ for $i \in \{1, 2, 3\}$. Therefore we have $Z_1$, $Z_2$ and $Z_3$ as the sets of terminal histories for which player $1$, $2$ and $3$ win respectively.
- The preference relation for player $1$, $\succsim_1$, is given as follows:
    - For $h, h' \in Z_1$, $h \sim_1 h'$.
    - For $h \in Z_1$ and $h' \in Z_2$ or $h' \in Z_3$, $h \succ_1 h'$.
- The preference relations for player $2$ and player $3$ ($\succsim_2$ and $\succsim_3$) are defined similarly.

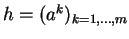

Two persons use the following procedure to share two desirable identical indivisible objects. One of them proposes an allocation, which the other either accepts or rejects. In the event of rejection, neither person receives either of the objects. Each person cares only about the number of objects he obtains.

An extensive game that models the individuals' predicament is $\langle N, H, P, (\succsim_i) \rangle$ where

- $N = \{1, 2\}$;
- $H$ consists of the ten histories $\emptyset$, (2,0), (1,1), (0,2), ((2,0), $y$), ((2,0), $n$), ((1,1), $y$), ((1,1), $n$), ((0,2), $y$), ((0,2), $n$);
- $P(\emptyset) = 1$ and $P(h) = 2$ for every nonterminal history $h \neq \emptyset$.
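To make the components concrete, the ten histories of this example can be written down directly. The following is a minimal sketch under assumptions of its own: histories are encoded as Python tuples of actions, the responses are written `"y"` (accept) and `"n"` (reject), and the names `EMPTY`, `actions` and `player` are chosen for the illustration.

```python
EMPTY = ()                           # the empty history
PROPOSALS = [(2, 0), (1, 1), (0, 2)]
RESPONSES = ["y", "n"]               # accept / reject

# H: every history is a tuple of the actions taken so far.
H = {EMPTY}
H |= {(p,) for p in PROPOSALS}
H |= {(p, r) for p in PROPOSALS for r in RESPONSES}

def actions(h):
    """A(h): the actions a such that h followed by a is again a history."""
    return {g[-1] for g in H if len(g) == len(h) + 1 and g[:-1] == h}

# Z: terminal histories are those after which no action is available.
Z = {h for h in H if not actions(h)}

def player(h):
    """Player function P: player 1 proposes, player 2 responds."""
    if h in Z:
        raise ValueError("P is defined only on nonterminal histories")
    return 1 if h == EMPTY else 2

# Every prefix of a history is itself a history.
prefix_closed = all(h[:-1] in H for h in H if h)
```

The set `Z` has six members, one for each proposal and response, and `actions(EMPTY)` recovers the three possible proposals.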

Figure 2: An extensive game that models the procedure for allocating two identical indivisible objects between two people.

A convenient representation of a game in extensive form is by the use of a tree. Each node in this tree is marked by two labels: one, a history $h$ to which that node corresponds, and two, the player $P(h)$ who makes the move if the game has a history $h$. Edges emanating from a node with history $h$ are incident on nodes that correspond to histories $(h, a)$ where $a$ is a move of the player $P(h)$. Finally, no edges emanate from the leaf nodes and they are labeled with a pay-off vector $(p[1], \ldots, p[n])$.

Note that in this tree, a path from the root node to any other node represents a history. In particular, the game will reach the state corresponding to a node $x$ if players follow edges corresponding to the unique path from the root node to $x$. Further, observe that the progress of the game can be traced out as a unique path from the root node to one of the leaf nodes. Every move of a player translates into following an arc from one node to another node.

Figure 2 represents the game tree for the example given above.

Given a game in extensive form along with player pay-offs, assuming that the players

are rational and aware of each other's rationality, how will the game end? Which

player will win in such a case? In this section, we are interested in developing

solution concepts for games in extensive form. We begin by defining strategy in such

a game, and then define Nash equilibria and Subgame Perfect equilibrium

in this context.

A strategy of a player in an extensive game is a plan that specifies the action chosen by the player for every history after which it is his turn to move. Hence, the strategy function $s_i$ for player $i$ is a function of the following form:

$$s_i : H_i \rightarrow A_i$$

where $H_i$ is the set of histories where it is player $i$'s move, and $A_i$ is the action space for player $i$. An important point here is that a strategy specifies the action chosen by a player for every history after which it is his turn to move, even for histories that, if the strategy is followed, are never reached. In other words, for each node in which it is player $i$'s move, his strategy should choose one of the edges emanating from that node.

So, for this example, a strategy of player $1$ has to choose one of the edges from $\{(2,0), (1,1), (0,2)\}$. A strategy of player $2$ has to choose $y$ or $n$ at each of the $3$ possible nodes in which it is his move, and can be represented by a triple like $(y, n, y)$, which implies that he chooses $y$, $n$ and $y$ if he is at the left, middle or right node respectively.

A strategy profile is a tuple consisting of a strategy of each player. In game tree terms, a strategy profile associates every nonterminal node with an edge emanating from it. A possible strategy profile can be

$$s_1 = (2,0), \qquad s_2 = (y, n, y).$$

We can use $((2,0), yny)$ to conveniently represent this strategy profile.

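A Nash equilibrium of an extensive game with perfect information is a strategy profile $s^*$ such that for every player $i \in N$ we have

$$O(s^*_{-i}, s^*_i) \succsim_i O(s^*_{-i}, s_i) \quad \text{for every strategy } s_i \text{ of player } i,$$

where $O(s)$ denotes the outcome (terminal history) that results when every player follows the strategy profile $s$.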

Using this definition, the game in the example above has 9 Nash equilibria; $((2,0), yyy)$ and $((1,1), nyy)$ are two of them (here, for example, $nyy$ means that player 2 rejects the proposal $(2,0)$ and accepts the other two). Can you figure out the rest? Note that the total number of strategy profiles is $3 \times 2^3 = 24$.
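The count can be verified by brute force. The sketch below is illustrative only: it assumes each person's pay-off is the number of objects he receives (zero for both after a rejection), writes a strategy of player 2 as a string such as `"nyy"` giving his responses to (2,0), (1,1) and (0,2) in that order, and uses helper names (`payoffs`, `is_nash`) invented for the example.

```python
from itertools import product

PROPOSALS = [(2, 0), (1, 1), (0, 2)]

S1 = PROPOSALS                                          # strategies of player 1
S2 = ["".join(r) for r in product("yn", repeat=3)]      # strategies of player 2

def payoffs(s1, s2):
    """Pay-off pair (player 1, player 2) when the profile (s1, s2) is played."""
    response = s2[PROPOSALS.index(s1)]
    return s1 if response == "y" else (0, 0)

def is_nash(s1, s2):
    """Neither player gains by deviating unilaterally."""
    u1, u2 = payoffs(s1, s2)
    no_gain_1 = all(payoffs(d, s2)[0] <= u1 for d in S1)
    no_gain_2 = all(payoffs(s1, d)[1] <= u2 for d in S2)
    return no_gain_1 and no_gain_2

profiles = list(product(S1, S2))
equilibria = [(s1, s2) for s1, s2 in profiles if is_nash(s1, s2)]
```

Enumerating `equilibria` lists all nine Nash equilibria out of the 24 strategy profiles.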

One of the reasons why this game has so many Nash equilibria is that the players are indifferent between strategies at some stage. For instance, at the node $(2,0)$, player 2 does not prefer the move $y$ over $n$, as they both lead to the same payoff $0$ for him. Games like PickStick are more interesting in the sense that one strategy is always strictly preferable to the other, and we will deal with such games only. (Payoffs which ensure such a property are called generic payoffs.)

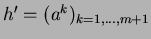

Consider another extensive game as modelled in Figure 3. This game has four Nash equilibria. One of them is given by

Can you figure out the others? We will discuss the other Nash equilibria in the remaining sections.


Figure 3: Another extensive game

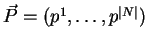

We will now describe Zermelo's Algorithm, which will help us develop the intuitive basis for subgame perfect equilibria. Suppose $x$ is a node in the game tree. Further, let it be player $i$'s turn when the game has reached node $x$. Zermelo's Algorithm associates with the node $x$ a pay-off vector $p_x$ (where $p_x[j]$ is the pay-off of player $j$, $j \in [n]$) with the property that player $i$ (who has to move when the game is at node $x$) can ensure a minimum pay-off of $p_x[i]$.

The labeling of the nodes with these pay-off vectors occurs in the following manner:

- All leaf nodes correspond to outcomes of the game. Hence, pay-off vectors for leaf nodes are part of the specification of the game.
- An internal node $x$, with player $i$ to move, is marked with a pay-off vector $p_x$ that is the pay-off vector of that child which maximizes player $i$'s own payoff. Formally, $p_x = p_y$ such that $y$ is a child of $x$ and $p_y[i] = \max \{ p_z[i] \mid z \text{ is a child of } x \}$.
- Call the edges between nodes that share the same pay-off labels blue edges.
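The three labeling rules translate into a short recursive procedure. The following is a sketch under stated assumptions, not the tree of Figure 3: the example tree is hypothetical, nodes are plain dictionaries, players are indexed from 0 (so index 0 stands for player 1), and ties are broken in favour of the first maximizing child.

```python
def zermelo(node, path=()):
    """Label the subtree rooted at `node`.

    A leaf is {"payoff": (...)}; an internal node is
    {"player": i, "children": {action: child}}.
    Returns (pay-off vector of the node, blue edges), where the blue edges
    map the path of every internal node to the action chosen there.
    """
    if "payoff" in node:
        return node["payoff"], {}
    i = node["player"]
    best_action, best_payoff, blue = None, None, {}
    for action, child in node["children"].items():
        payoff, child_blue = zermelo(child, path + (action,))
        blue.update(child_blue)
        # Keep the child whose vector maximizes the mover's own pay-off.
        if best_payoff is None or payoff[i] > best_payoff[i]:
            best_action, best_payoff = action, payoff
    blue[path] = best_action
    return best_payoff, blue

# Hypothetical tree: player 1 (index 0) moves at the root, player 2 (index 1) after "L".
tree = {
    "player": 0,
    "children": {
        "L": {"player": 1, "children": {
            "l": {"payoff": (3, 1)},
            "r": {"payoff": (1, 2)},
        }},
        "R": {"payoff": (2, 0)},
    },
}
root_payoff, blue_edges = zermelo(tree)
```

In this toy tree player 2 would answer `L` with `r`, so player 1 prefers `R` and the root is labeled `(2, 0)`.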

For example, consider the game tree in Figure 3. The red nodes indicate player 1's turn and the blue nodes indicate player 2's turn. Red arcs correspond to moves made by player 1 and black arcs correspond to moves made by player 2. For each of the leaves, a pair of values is given: the pay-off to player 1 followed by the pay-off to player 2.

In this game, Zermelo's Algorithm assigns labels to internal nodes as shown in Figure 4. The pay-off vector that labels the root node can be achieved simply if the players follow the strategies corresponding to the unique path of blue edges from the root to a leaf node.

Figure 4: Execution of Zermelo's Algorithm.

After running Zermelo's Algorithm, we obtain a pay-off vector for each node, and every internal node has exactly one blue edge emanating from it (with generic payoffs there are no ties). These edges constitute a strategy profile. Note that this strategy profile constitutes a Nash equilibrium. This Nash equilibrium is called the Subgame Perfect equilibrium. It can be thought of as a more credible Nash equilibrium. The payoff vector at the root node is the payoff associated with this equilibrium.

We need to define a subgame before we can formally define a Subgame Perfect equilibrium. A subgame of an extensive game with game tree $T$ is a game modelled by the subtree under a particular node $x$, i.e. a subtree with $x$ as the root and all its descendants as the other nodes. So the number of subgames induced by a game is equal to the number of nonterminal nodes.

A strategy profile $s^*$ is a Subgame Perfect Equilibrium of a game if it induces a Nash equilibrium in every subgame of the game.
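As a small illustration of the definition, one can test every strategy profile of the earlier object-allocation example for subgame perfection. The sketch below rests on the same assumptions as before (a person's pay-off is the number of objects he receives, zero for both after a rejection, and player 2's strategy is a string of responses to (2,0), (1,1), (0,2)); all function names are hypothetical. That game has four subgames: the whole game and the three one-move subgames that follow each proposal.

```python
from itertools import product

PROPOSALS = [(2, 0), (1, 1), (0, 2)]
S2 = ["".join(r) for r in product("yn", repeat=3)]

def outcome(proposal, s2):
    """Pay-off pair reached when `proposal` is made and player 2 follows s2."""
    accepted = s2[PROPOSALS.index(proposal)] == "y"
    return proposal if accepted else (0, 0)

def nash_in_whole_game(s1, s2):
    u1, u2 = outcome(s1, s2)
    return (all(outcome(d, s2)[0] <= u1 for d in PROPOSALS)
            and all(outcome(s1, d)[1] <= u2 for d in S2))

def nash_in_subgame(proposal, s2):
    """Subgame after `proposal`: only player 2 moves, so his response must
    be at least as good for him as the alternative (accept vs. reject)."""
    return outcome(proposal, s2)[1] >= max(proposal[1], 0)

def subgame_perfect(s1, s2):
    return (nash_in_whole_game(s1, s2)
            and all(nash_in_subgame(p, s2) for p in PROPOSALS))

spe = [(s1, s2) for s1 in PROPOSALS for s2 in S2 if subgame_perfect(s1, s2)]
```

Because player 2 is indifferent after the proposal (2,0), the pay-offs of this game are not generic and two of the nine Nash equilibria survive: `((2, 0), "yyy")` and `((1, 1), "nyy")`.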

Does Subgame Perfect Equilibrium guarantee the best payoff for both players?

Consider the example in Figure 4. Note that there is an outcome that would be better for both players than the outcome of the Subgame Perfect Equilibrium. One can find a strategy profile which is a Nash equilibrium and achieves this better payoff: under it, neither player can improve his utility by deviating on his own. In fact, there is a second Nash equilibrium with the same payoff.

However, in a zero-sum game there cannot be an outcome where both players improve their pay-offs as compared to the Subgame Perfect Equilibrium (if one player increases his pay-off, then the pay-off of the other player, by definition, must necessarily decrease). It is left as an exercise to the reader to show that, in a two-player zero-sum game, every Nash equilibrium yields the same pay-offs as the Subgame Perfect equilibrium.

In the remainder of these scribes, we introduce the theory of utility that will continue to be the subject of the next couple of lectures. The model we have studied assumes that each decision-maker is "rational" in the sense that he is aware of his alternatives, forms expectations about any unknowns, has clear preferences and chooses his action deliberately after some process of optimization.

Sometimes the decision-maker's preferences are specified by giving a utility function $U : A \rightarrow \mathbb{R}$ that maps the set of outcomes $A$ to real numbers, conserving the preference relation (a complete transitive reflexive binary relation) $\succsim$ on the set of outcomes. Here, we must have $U(a) \geq U(b)$ if and only if $a \succsim b$.

Consider the famous gold and water paradox. The utility of gold is much lower than that of water; however, the value of gold is much higher than that of water. Why is it the case that a commodity of such low utility is valued so much higher? Informally, one gets the feeling that since water is far more abundant than gold, availability must play some role in determining value. The theory of utility seeks to answer such questions in a formal model.

Another paradox is the following. Suppose I ask you to choose from one of the following options: 1. I give you Rs. 10 with probability 1, or 2. I give you Rs. 70 with probability of 0.5. Which option are you likely to choose? Now consider a scaled variation of this puzzle. I now offer you Rs. 10 crore with probability 1, or on the other hand Rs. 70 crore with probability of 0.5. Which option are you likely to choose now?

Keshav Kunal

2002-08-30
